Today I learned that you can make strongly typed CLI flags using Go’s standard flag package. flag is a package I’m fond of and I’ve written about it before. I think the package is underappreciated and harbours hidden depths.

You make strongly typed flags in Go by implementing the flag.Value interface. One thing I really liked about this approach is that it gives you a contained place to validate flag values without polluting main!

Let’s explore the idea by creating a strongly typed flag that selects between pretty logs (for when you are developing) and JSON logs (for production).
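As a rough sketch of where this is heading, here is one way such a flag might look. The `LogFormat` type, its constants, the `parseLogFormat` helper, and the `-log-format` flag name are all made up for illustration; only `flag.Value`, `flag.NewFlagSet`, and `FlagSet.Var` come from the standard library.

```go
package main

import (
	"flag"
	"fmt"
	"io"
	"os"
)

// LogFormat is a hypothetical strongly typed flag value.
type LogFormat string

const (
	LogPretty LogFormat = "pretty" // for development
	LogJSON   LogFormat = "json"   // for production
)

// String implements flag.Value. The flag package may call it on a
// zero value, so it guards against a nil receiver.
func (f *LogFormat) String() string {
	if f == nil {
		return ""
	}
	return string(*f)
}

// Set implements flag.Value. All validation lives here, not in main.
func (f *LogFormat) Set(s string) error {
	switch LogFormat(s) {
	case LogPretty, LogJSON:
		*f = LogFormat(s)
		return nil
	default:
		return fmt.Errorf("must be %q or %q", LogPretty, LogJSON)
	}
}

// parseLogFormat is an illustrative helper: it parses args with a
// private FlagSet and defaults to pretty logs.
func parseLogFormat(args []string) (LogFormat, error) {
	format := LogPretty
	fs := flag.NewFlagSet("app", flag.ContinueOnError)
	fs.SetOutput(io.Discard) // main reports the error itself
	fs.Var(&format, "log-format", "log output format: pretty or json")
	err := fs.Parse(args)
	return format, err
}

func main() {
	format, err := parseLogFormat(os.Args[1:])
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(2)
	}
	fmt.Println("logging as:", format)
}
```

Because `Set` rejects anything other than the two known formats, an invalid value such as `-log-format=xml` fails at parse time and the rest of the program only ever sees a valid `LogFormat`.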

I’ve been reading Rust Atomics and Locks by Mara Bos. If you’re into low-level concurrency primitives, it’s a great book. The rest of you can leave now 🙃

Memory ordering is a place where Rust and Go diverge, and I think it’s illustrative of the difference in language philosophy. Rust provides a selection of memory orderings per atomic variable operation, whereas Go provides just one. Rust chooses raw performance whereas Go selects ease-of-use.

Let’s dig in.
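To give a flavour of the Go side, here is a minimal sketch of publishing a value through an atomic flag. The `publish` function is invented for illustration. In Rust you would pick an ordering for each operation (typically a `Release` store paired with an `Acquire` load here); in Go, `atomic.Bool` has no ordering parameter, and the Go memory model specifies that atomic operations behave as sequentially consistent.

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
	"sync/atomic"
)

// publish is a hypothetical example: the writer stores a plain value,
// then sets an atomic flag. A reader that observes the flag as true is
// guaranteed to see the value written before it.
func publish(v int) int {
	var (
		data  int         // plain, non-atomic variable
		ready atomic.Bool // no memory ordering argument to choose
		wg    sync.WaitGroup
		got   int
	)

	wg.Add(1)
	go func() {
		defer wg.Done()
		for !ready.Load() { // spin until the writer publishes
			runtime.Gosched()
		}
		got = data // safe: ordered after the atomic store below
	}()

	data = v
	ready.Store(true)

	wg.Wait()
	return got
}

func main() {
	fmt.Println(publish(42))
}
```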

I finally got to reading Paul Ford’s opinion piece in the New York Times, The A.I. Disruption Has Arrived, and It Sure Is Fun. I’ve long nodded my head to Paul’s essays, and this wasn’t an exception.

Especially this part near the end, which gels with my (naive?) hopes about AI reducing the amount of software suckiness in people’s lives:

> I collect stories of software woe. I think of the friend at an immigration nonprofit who needs to click countless times, in mounting frustration, to generate critical reports. Or the small-business owners trying to operate everything with email and losing orders as a result. Or my doctor, whose time with patients is eaten up by having to tap furiously into the hospital’s electronic health record system.
>
> After decades of stories like those, I believe there are millions, maybe billions, of software products that don’t exist but should: dashboards, reports, apps, project trackers and countless others. People want these things to do their jobs, or to help others, but they can’t find the budget. They make do with spreadsheets and to-do lists.
>
> My industry is famous for saying no, or selling you something you don’t need. We have an earned reputation as a lot of really tiresome dudes. But I think if vibe coding gets a little bit better, a little more accessible and a little more reliable, people won’t have to wait on us. They can just watch some how-to videos and learn, and then they can have the power of these tools for themselves.

I hope we can, though what that means for the professional programmer, I don’t know. Clearly there’s a lot of software where “probably right” isn’t good enough; perhaps that is where we will re-find our niche.

I’m interested in how we can create software that’s malleable for people’s needs. I think AI can be a tool to achieve this.

Let’s talk about one aspect of that malleability: how things look. How can AI help with this?

Sadly, by default, AI output has a consistent “non-style”. Even Anthropic call it “AI slop”, and suggest breaking the mold by telling the model:

> You tend to converge toward generic, “on distribution” outputs. In frontend design, this creates what users call the “AI slop” aesthetic. Avoid this: make creative, distinctive frontends that surprise and delight.

Later, Anthropic note that some hallmarks of this style are large border radius, system fonts, and (oddly specific!) purple gradients. I tested this out with a few prompts.

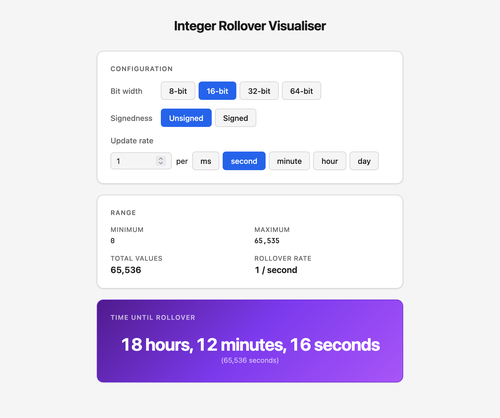

I quickly knocked up a demo app to show this. Its purpose is to tell you how long an integer of a given size will take to roll over, since rollover can cause problems. But what we’re interested in is how it looks, which… isn’t great:

- Large border radius — check!

- System fonts — check!

- Purple gradient (🤷) — double-check!

But thankfully this is only what comes out of the model by default. With a little work, we can steer the model towards much improved designs.

So how can we bend the styling to our will? One way I’ve come up with is: write a Claude Skill that contains a design system using a style I like. Here’s the result:

Same layout, nicer design. At least for my tastes. But this approach is easily adapted to whatever your taste is!

Otherwise, read on for how I got the idea, and built it. It’s a bit of narrative rather than a guide, but it’s got lots of screenshots and prompts to inspire along the way.

My thoughts turn to the analogy of a car.

A car can travel at 140mph. But if I went that fast I’d drive into a wall. Driving at the maximum speed of the car is counter-productive. Instead, I have to remain at human-compatible speed.

AI codes at 140mph. I believe that’s not human-compatible. Perhaps we will find a way to harness that speed; to make it human-compatible. More likely we will find that certain tasks can go that fast, and others can’t. 140mph is okay on a race track but not on a back street.

We do not have to force ourselves to adapt to the speed AI can generate code at. We do not have to travel at theoretical maximums. Driving at normal speeds is still much faster than walking. In the same way, we can write code faster with AI help, even if that help is not at top speed.

If we don’t insist on top speed, AI code might even be better than we’d write alone.

Wouldn’t that be nice?